Scams at scale: Cybercrime, state actors, and the financial system

Organized scams have evolved into a structured, cross-border industry that extracts billions from the US economy each year. What once looked like isolated fraud cases now resembles a coordinated financial supply chain. Victims are sourced, groomed, exploited, and the proceeds are moved through regulated institutions with speed and intent.

The human impact is immediate and personal: retirement savings wiped out, small businesses destabilized, families left financially exposed. The systemic implications are broader and more uncomfortable.

According to the FBI’s Internet Crime Complaint Center (IC3), reported cyber-enabled fraud losses in the US exceed $12 billion annually, with investment scams alone accounting for over $4 billion of that total. The Federal Trade Commission reports similarly sharp increases. These figures capture only reported cases. Underreporting remains substantial, meaning the true economic cost is almost certainly higher.

So, we know scams are increasing. But are the financial systems structured to disrupt them, and at scale?

This article covers:

- Reported US cyber-enabled fraud losses now exceed $12 billion annually, with real losses likely significantly higher.

- Modern scams operate as organized enterprises with defined roles, technology stacks, and cross-border logistics.

- Generative AI tools are lowering the cost of deception and increasing operational efficiency.

- Mule accounts convert digital fraud into regulated financial flows.

- In some jurisdictions, limited enforcement enables organized scam ecosystems to persist.

- Regulators increasingly expect earlier, system-level disruption rather than post-event reporting.

The industrialization of online scams in the United States

Today’s scam networks resemble structured enterprises. There are teams responsible for initial outreach, others focused on social engineering, and others tasked with payment processing and fund movement. In some regions, law enforcement and investigative reporting have documented large compounds housing hundreds of operators running investment and romance scams with scripted playbooks.

But digging into research reports and enforcement actions reveals that this is not limited to one geography. Business email compromise schemes, ransomware operations, and large-scale investment fraud networks often function with defined hierarchies and affiliate models. Ransomware groups, for example, commonly operate on a “ransomware-as-a-service” basis, providing infrastructure to affiliates in exchange for a share of proceeds. That model mirrors legitimate software distribution.

Generative AI has accelerated this industrialization. Fraudsters can now produce tailored phishing emails, realistic voice clones, and multilingual scripts at scale, significantly lowering the barrier to convincing deception. The cost of producing credible fraudulent content has dropped, while potential returns remain high. The number of scam pages created with generative AI quadrupled globally between May 2024 and April 2025, with more than 38,000 new scam pages appearing per day.

According to the UN Office on Drugs and Crime, “cyber-enabled fraud operations in Southeast Asia have taken on industrial proportions.” One UNODC author told ProPublica: “Banks have never been targeted at this scale, in these ways.”

The result is that scams now function as structured, repeatable extraction mechanisms.

It is also important to note that, according to the UN Human Rights Office, hundreds of thousands of people have been trafficked and remain trapped in scam centers in Cambodia and Myanmar, with other operations run from Laos, the Philippines, and Thailand.

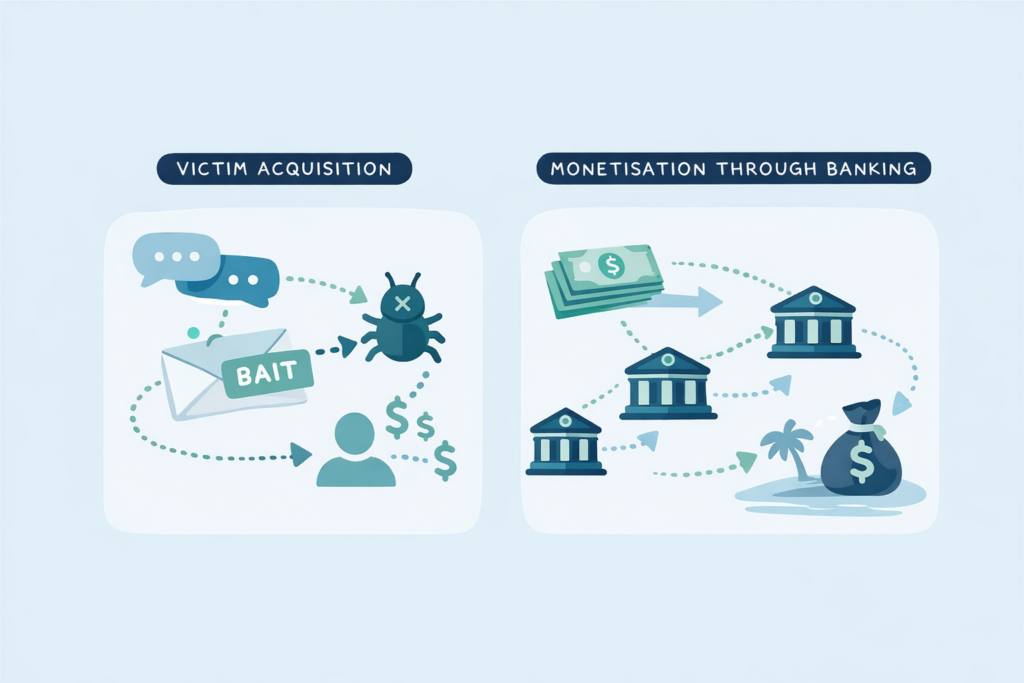

From victim acquisition to financial system monetization

To understand why this has become a system-level issue, it helps to follow the lifecycle.

The first stage is victim acquisition. This may involve phishing, impersonation of trusted institutions, social grooming through dating platforms, or malware that captures credentials. Increasingly, these approaches are combined. A victim may first encounter a scam through social media, then be directed to a fraudulent investment platform, and later be persuaded to transfer funds through what appears to be a legitimate banking channel.

The second stage is extraction. Investment scams now represent the largest category of reported losses in the US, according to IC3 data. Business email compromise remains a persistent threat to corporate treasuries, with fraudsters manipulating payment instructions and vendor communications. Ransomware adds another dimension, where organizations are coerced into payment under threat of operational disruption or data exposure.

The third stage (and the one most relevant to banks) is monetization.

The scam begins in cyberspace. It becomes economically real when funds enter the regulated financial system. That is the conversion point where digital deception turns into banked money.

You can see the problem here. Each institution may detect unusual activity within its own accounts, yet the broader enterprise often spans multiple banks, jurisdictions, and payment rails.

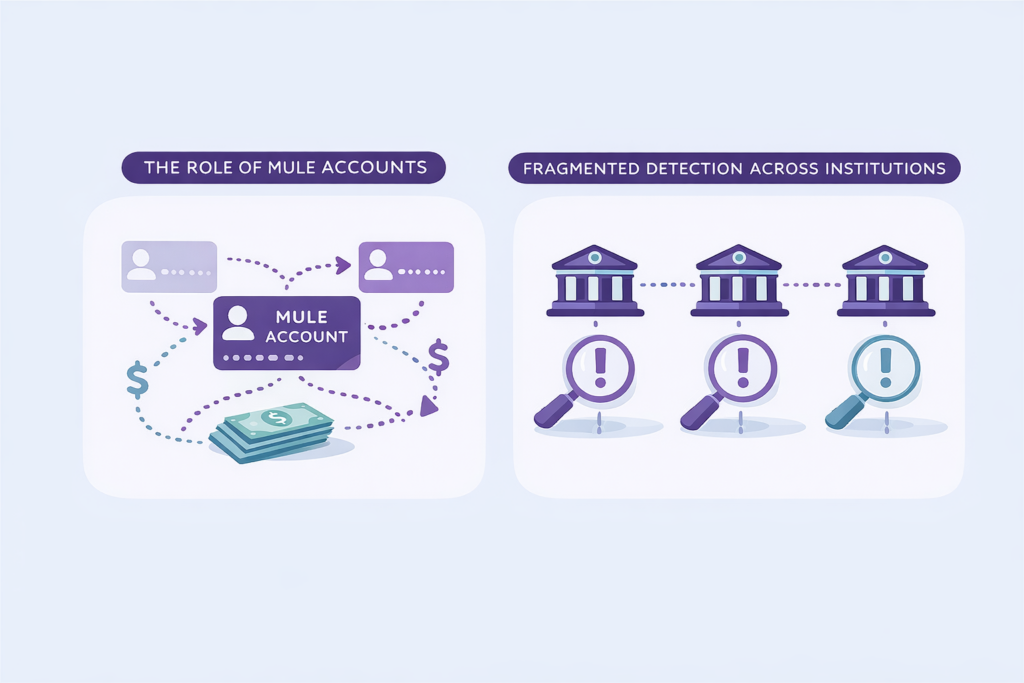

The role of mule accounts in moving criminal proceeds

Mule accounts are central to this monetization process.

Funds are routed through individuals recruited via job advertisements, social media outreach, or direct solicitation. Some recruits understand the illegality; others are misled into believing they are performing legitimate work. Either way, their accounts become transit points for criminal proceeds.

Here are two examples.

- A student responds to an online advert offering easy income for “payment processing” and moves substantial sums through newly opened accounts across several institutions within days.

- An individual facing financial hardship is persuaded to open multiple accounts and transfer incoming funds onward in exchange for a small commission.

Transfers are often structured to avoid obvious triggers and executed with speed to reduce the chance of intervention. Meanwhile, funds may be consolidated offshore or converted into cryptoassets before re-entering the traditional financial system elsewhere.
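The rapid pass-through pattern described above can be sketched as a simple screening rule. This is a minimal illustration, assuming a simplified transfer record: the `Transfer` type, the `flag_rapid_pass_through` function, and the thresholds (a 24-hour window, 90% of the credit forwarded, a $1,000 minimum) are hypothetical choices, not values drawn from any regulator or vendor. A real monitoring system would combine many more signals.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Transfer:
    """Hypothetical, simplified transfer record for one account."""
    account: str
    timestamp: datetime
    amount: float  # positive = incoming credit, negative = outgoing debit


def flag_rapid_pass_through(
    transfers,
    window=timedelta(hours=24),   # assumed look-ahead window after a credit
    min_ratio=0.9,                # assumed share of the credit that is forwarded
    min_inflow=1000.0,            # assumed minimum credit size worth checking
):
    """Return a sorted list of accounts that forwarded most of a large
    incoming credit within `window` of receiving it."""
    by_account = {}
    for t in transfers:
        by_account.setdefault(t.account, []).append(t)

    flagged = []
    for account, items in by_account.items():
        credits = [t for t in items if t.amount >= min_inflow]
        debits = [t for t in items if t.amount < 0]
        for credit in credits:
            deadline = credit.timestamp + window
            # Total value sent onward between the credit and the deadline.
            forwarded = sum(
                -d.amount
                for d in debits
                if credit.timestamp <= d.timestamp <= deadline
            )
            if forwarded >= min_ratio * credit.amount:
                flagged.append(account)
                break  # one hit is enough to flag the account
    return sorted(flagged)
```

A rule like this only sees one institution's ledger. An account that receives funds at one bank and forwards them from another would pass unnoticed, which is exactly the limitation the network exploits.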

No single bank sees the entire chain. Organized networks rely on that fragmentation.

State-tolerated cybercrime and the geopolitical dimension

This brings us to a more contentious issue.

In certain jurisdictions, large-scale scam and ransomware operations have persisted for years with limited disruption. Economic incentives, corruption, or selective enforcement can reduce the operating risk for organized groups. In some instances, overlaps have been observed between cybercriminal networks and actors aligned with broader national interests.

That does not imply every scam is state-sponsored. But it does mean that in parts of the world, the environment allows organized cybercrime ecosystems to mature and scale. Ransomware groups openly recruit affiliates. Scam networks operate with infrastructure that suggests long-term continuity rather than short-lived operations.

Financial fraud increasingly intersects with national security considerations. Proceeds from organized scams do not exist in isolation; they can be reinvested into further criminal activity or intersect with sanctions evasion and other destabilizing conduct.

Scams at scale therefore extend beyond consumer protection. They touch financial resilience and strategic stability.

Why traditional AML detection is struggling to keep pace

Financial institutions have invested heavily in transaction monitoring, customer due diligence, and suspicious activity reporting. They have typically strengthened onboarding controls, improved typology development, and refined escalation processes.

But institution-level controls alone are not enough.

Organized scam networks are distributed by design. Mule accounts are opened across institutions. Transfers move rapidly between banks. Even when a single institution identifies suspicious activity and files a SAR, funds may already have traversed several entities.

And even when you’ve made upgrades internally, including robust monitoring, rapid escalation, and detailed reporting, the broader network often remains intact.

Regulators are increasingly focused on earlier disruption, particularly in areas such as authorized push payment fraud and mule detection. The expectation is moving beyond documenting suspicious flows toward preventing them.

Needless to say, institutions must also manage false positives carefully. Excessive friction undermines customer experience and trust. The challenge is to widen coverage without overwhelming operations.

A system-level response to organized scam networks

Take this a step further and the conclusion becomes difficult to avoid.

Scams operate as coordinated networks. Financial institutions largely detect risk in isolation. That structural mismatch creates opportunity for organized groups to scale.

If monetization depends on regulated infrastructure, that infrastructure becomes the logical intervention point. The next step is not more rules but better coordination: intelligence mechanisms that allow institutions to identify shared risk indicators without centralizing sensitive customer data.

Then, and only then, are you ready to move from reactive reporting to proactive disruption.

The human impact of scams at scale is already visible in the billions lost and the lives disrupted. The systemic implications are unfolding more gradually, but no less seriously. As organized cybercrime continues to industrialize, the financial system will be judged not only on how well it reports suspicious activity, but on how effectively it prevents organized extraction at scale.

The good news is that Consilient enables banks to identify cross-institution financial crime networks without sharing sensitive customer data. By applying federated learning across institutions, banks can detect mule activity and organized scam flows earlier, reducing losses, lowering false positives, and strengthening system-wide resilience.
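For readers unfamiliar with federated learning, the general idea can be sketched with federated averaging: each institution trains a model on its own data, and only the model weights leave the building. The code below is a generic textbook-style illustration, not Consilient's implementation. The function names, the logistic-regression model, and the two-feature layout (a bias term and a hypothetical "rapid pass-through" flag) are all assumptions made for the example.

```python
import math


def local_update(weights, data, lr=0.1, epochs=5):
    """Train logistic regression on one institution's private data.

    `data` is a list of (features, label) pairs that never leaves
    the institution; only the updated weights are returned.
    """
    w = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for features, label in data:
            z = sum(wi * xi for wi, xi in zip(w, features))
            pred = 1.0 / (1.0 + math.exp(-z))
            for i, xi in enumerate(features):
                grad[i] += (pred - label) * xi
        # Gradient-descent step on the mean gradient.
        w = [wi - lr * g / len(data) for wi, g in zip(w, grad)]
    return w


def federated_average(updates):
    """Combine (weights, n_samples) pairs into a sample-weighted mean."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


def federated_round(global_weights, institutions):
    """Run one round: every institution trains locally, then the
    coordinator averages the returned weights."""
    updates = [
        (local_update(global_weights, data), len(data))
        for data in institutions
    ]
    return federated_average(updates)
```

In this sketch the coordinator only ever sees weight vectors and sample counts. Production systems typically add further protections on top of this, which the example does not attempt to show.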

If you are rethinking how your institution approaches organized scam risk, we should talk. Speak to our team about collaborative detection at scale.